As customers migrate to network fabrics based on Virtual Extensible Local Area Network/Ethernet Virtual Private Network (VXLAN/EVPN) technology, questions about the implications for application performance, Quality of Service (QoS) mechanisms, and congestion avoidance often come up. This blog post addresses some of the common areas of confusion and concern, and touches on a few best practices for getting the most value from Cisco Nexus 9000 switches in Data Center fabric deployments by using the available Intelligent Buffering capabilities.

What Is the Intelligent Buffering Capability in Nexus 9000?

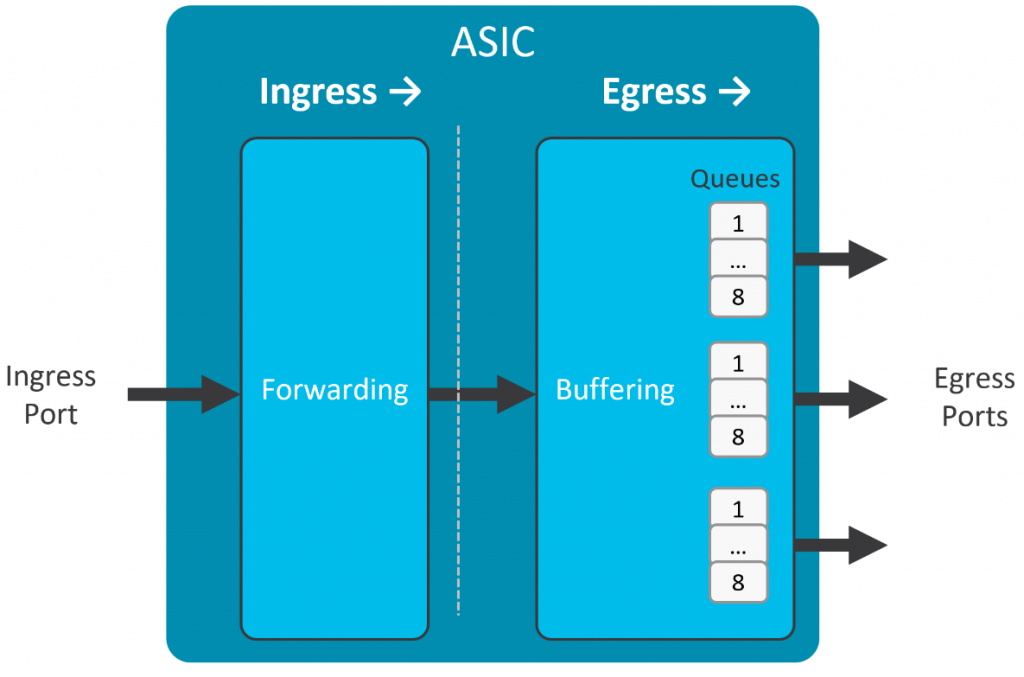

Cisco Nexus 9000 Series switches implement an egress-buffered shared-memory architecture, as shown in Figure 1. Each physical interface has 8 user-configurable output queues that contend for shared buffer capacity when congestion occurs. A buffer admission algorithm called Dynamic Buffer Protection (DBP), enabled by default, ensures fair access to the available buffer among any congested queues.
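The post does not describe how DBP computes its admission limits. As a rough mental model only, the sketch below uses the classic dynamic-threshold scheme from the shared-memory switching literature: a queue may keep growing only while its occupancy stays below a multiple (`alpha`) of the currently free buffer. The class name, `alpha`, and the cell counts are hypothetical and not taken from Nexus 9000 documentation.

```python
class SharedBuffer:
    """Toy shared egress buffer using dynamic-threshold admission (hypothetical model)."""

    def __init__(self, total_cells: int, alpha: float = 1.0) -> None:
        self.total_cells = total_cells
        self.alpha = alpha                    # how much of the free space one queue may claim
        self.occupancy: dict[int, int] = {}   # queue id -> cells currently held

    def free_cells(self) -> int:
        return self.total_cells - sum(self.occupancy.values())

    def admit(self, queue: int) -> bool:
        """Admit one cell for `queue` if it is still under its dynamic threshold."""
        used = self.occupancy.get(queue, 0)
        free = self.free_cells()
        # The threshold shrinks as the shared buffer fills, so no single
        # congested queue can monopolize the remaining capacity.
        if free == 0 or used >= self.alpha * free:
            return False
        self.occupancy[queue] = used + 1
        return True


def fill(buf: SharedBuffer, queue: int) -> int:
    """Offer cells to `queue` until the first rejection; return how many were admitted."""
    admitted = 0
    while buf.admit(queue):
        admitted += 1
    return admitted


buf = SharedBuffer(total_cells=100, alpha=1.0)
print(fill(buf, 0), fill(buf, 1), buf.free_cells())  # 50 25 25
```

Even with two queues fully congested, a quarter of the buffer in this toy model stays free for a queue that has not yet seen congestion, which is the kind of fairness and headroom the paragraph describes.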

In addition to DBP, two key features, Approximate Fair Drop (AFD) and Dynamic Packet Prioritization (DPP), help speed initial flow establishment, reduce flow-completion time, avoid congestion buildup, and maintain buffer headroom for absorbing microbursts.

AFD uses built-in hardware capabilities to separate individual 5-tuple flows into two categories, elephant flows and mouse flows:

- Elephant flows are longer-lived, sustained-bandwidth flows that can benefit from congestion control signals, such as Explicit Congestion Notification (ECN) Congestion Experienced (CE) marking or random discards, that influence the windowing behavior of Transmission Control Protocol (TCP) stacks. The TCP windowing mechanism controls the transmission rate of TCP sessions, backing off the transmission rate when ECN CE markings, or unacknowledged sequence numbers, are observed (see the "More Information" section for additional details).

- Mouse flows are shorter-lived flows that are unlikely to benefit from TCP congestion control mechanisms. These flows consist of the initial TCP 3-way handshake that establishes the session, along with a relatively small number of additional packets, and are then terminated. By the time any congestion control is signaled for the flow, the flow is already complete.
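To make the split concrete, here is a minimal sketch that labels each 5-tuple flow by the number of bytes observed so far. The byte threshold, the `FlowClassifier` name, and the absence of any aging for idle flows are simplifying assumptions for illustration; the post does not specify how the hardware decides that a flow has become an elephant.

```python
from typing import Dict, Tuple

# (source IP, destination IP, source port, destination port, IP protocol number)
FiveTuple = Tuple[str, str, int, int, int]


class FlowClassifier:
    """Hypothetical byte-count classifier: a flow becomes an elephant past a threshold."""

    def __init__(self, elephant_bytes: int = 1_000_000) -> None:
        self.elephant_bytes = elephant_bytes
        self.bytes_seen: Dict[FiveTuple, int] = {}

    def observe(self, flow: FiveTuple, packet_len: int) -> str:
        """Account one packet to `flow` and return the flow's current category."""
        total = self.bytes_seen.get(flow, 0) + packet_len
        self.bytes_seen[flow] = total
        return "elephant" if total >= self.elephant_bytes else "mouse"


clf = FlowClassifier()
rpc = ("10.0.0.1", "10.0.0.2", 40000, 443, 6)     # short request/response over TCP
backup = ("10.0.0.3", "10.0.0.4", 40001, 873, 6)  # long-lived bulk transfer over TCP

for _ in range(5):
    rpc_state = clf.observe(rpc, 1500)
for _ in range(1000):
    backup_state = clf.observe(backup, 1500)
print(rpc_state, backup_state)  # mouse elephant
```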

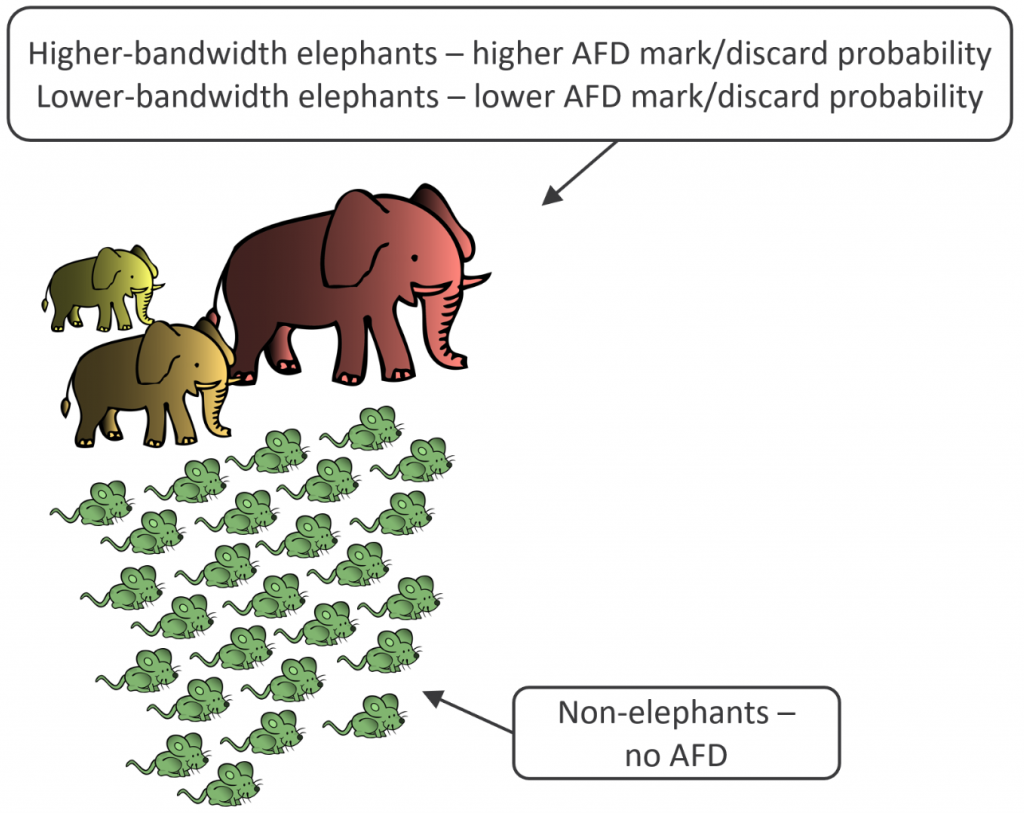

As shown in Figure 2, AFD further characterizes elephant flows according to their relative bandwidth utilization: a high-bandwidth elephant flow has a higher probability of experiencing ECN CE marking, or discards, than a lower-bandwidth elephant flow. A mouse flow has zero probability of being marked or discarded by AFD.
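One common way to express this behavior, taken from the general AFD literature rather than from Nexus 9000 documentation, is to compare each elephant's arrival rate with a per-queue fair rate. A flow sending at or below the fair rate is left alone, and a flow sending above it is marked or dropped with probability `1 - fair_rate / flow_rate`. The function below is a sketch under that assumption, with illustrative rates in Gbps.

```python
def afd_mark_probability(is_elephant: bool, flow_rate: float, fair_rate: float) -> float:
    """Probability that AFD marks (ECN CE) or drops a packet of this flow.

    Sketch of the textbook AFD rule: mice are never touched, and an elephant
    is penalized only for the share of its rate above the queue's fair rate.
    """
    if not is_elephant or flow_rate <= fair_rate:
        return 0.0
    return 1.0 - fair_rate / flow_rate


fair = 2.0  # Gbps, hypothetical fair rate for the congested queue
for name, elephant, rate in [("mouse", False, 0.5),
                             ("small elephant", True, 4.0),
                             ("big elephant", True, 8.0)]:
    print(f"{name}: {afd_mark_probability(elephant, rate, fair):.2f}")
# mouse: 0.00
# small elephant: 0.50
# big elephant: 0.75
```

The higher-bandwidth elephant is penalized more heavily than the lower-bandwidth one, and the mouse is never penalized, matching the ordering shown in Figure 2.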

If you are familiar with the older Weighted Random Early Detection (WRED) mechanism, you can think of AFD as a kind of "bandwidth-aware WRED." With WRED, any packet, whether it belongs to a mouse flow or an elephant flow, is potentially subject to marking or discards. In contrast, with AFD, only packets belonging to sustained-bandwidth elephant flows may be marked or discarded, with higher-bandwidth elephants more likely to be affected than lower-bandwidth elephants. A mouse flow is never affected by these mechanisms.
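For contrast, a textbook WRED profile looks only at the averaged queue depth. In the sketch below, which uses hypothetical thresholds in KB, the function has no notion of which flow a packet belongs to. A handshake packet and a bulk-transfer packet arriving at the same moment therefore face exactly the same marking or drop probability. That flow-blindness is what AFD removes.

```python
def wred_probability(avg_queue_kb: float, min_th_kb: float = 100.0,
                     max_th_kb: float = 300.0, max_p: float = 0.1) -> float:
    """Classic WRED: probability rises linearly between the two thresholds.

    Note there is no flow argument: every packet in the queue is treated alike.
    """
    if avg_queue_kb < min_th_kb:
        return 0.0
    if avg_queue_kb >= max_th_kb:
        return 1.0  # above the max threshold, every packet is marked or dropped
    return max_p * (avg_queue_kb - min_th_kb) / (max_th_kb - min_th_kb)


depth = 200.0  # KB of average queue depth, hypothetical
handshake_p = wred_probability(depth)  # packet from a mouse flow
bulk_p = wred_probability(depth)       # packet from an elephant flow
print(handshake_p == bulk_p, handshake_p)  # True 0.05
```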

Additionally, the AFD marking or discard probability for elephants increases as the queue becomes more congested. This behavior ensures that TCP stacks back off well before all of the available buffer is consumed, which avoids further congestion and leaves ample buffer headroom to absorb instantaneous bursts of back-to-back packets on previously uncongested queues.
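The sketch below adds the queue-depth dimension to the textbook AFD rule. It assumes, purely for illustration, that the fair rate is scaled down in proportion once the queue exceeds a target depth. Real AFD implementations adjust the fair rate with a feedback loop whose details the post does not cover, and `fair_rate` and its parameters are hypothetical. The point is qualitative: the same 8 Gbps elephant is marked more often as the queue deepens, while mice stay untouched.

```python
def fair_rate(base_fair_gbps: float, queue_kb: float, target_kb: float) -> float:
    """Hypothetical fair-rate estimate that shrinks once the queue passes its target depth."""
    if queue_kb <= target_kb:
        return base_fair_gbps
    return base_fair_gbps * target_kb / queue_kb


def afd_probability(is_elephant: bool, flow_gbps: float, fair_gbps: float) -> float:
    """Textbook AFD rule: only elephants above the fair rate are marked or dropped."""
    if not is_elephant or flow_gbps <= fair_gbps:
        return 0.0
    return 1.0 - fair_gbps / flow_gbps


for depth_kb in (100, 200, 400, 800):
    fr = fair_rate(base_fair_gbps=4.0, queue_kb=depth_kb, target_kb=200)
    p = afd_probability(True, 8.0, fr)
    print(f"queue {depth_kb:>3} KB -> fair {fr:.1f} Gbps, elephant mark prob {p:.3f}")
```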

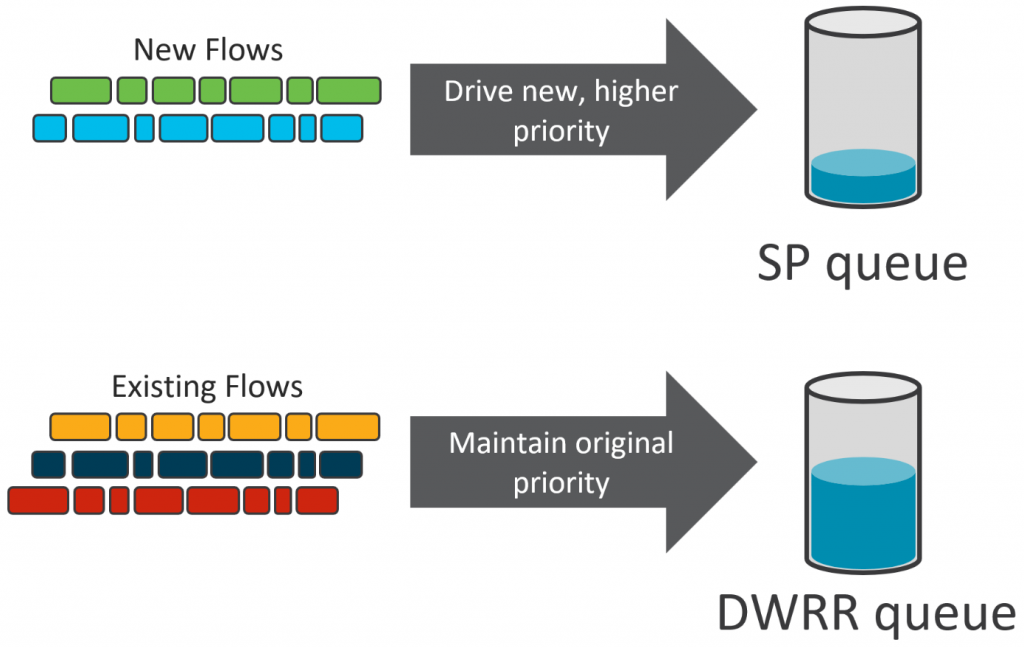

DPP, another hardware-based capability, promotes the initial packets in a newly observed flow to a higher-priority queue than the one they would have traversed "naturally." Take, for example, the establishment of a new TCP session, which consists of the TCP 3-way handshake. If any of these packets sit in a congested queue and therefore experience additional delay, application performance can be materially affected.

As shown in Figure 3, instead of enqueuing these packets in their originally assigned queue, where congestion is potentially more likely, DPP promotes these initial packets to a higher-priority queue (a strict priority (SP) queue, or simply a higher-weighted Deficit Weighted Round-Robin (DWRR) queue). This results in expedited packet delivery with a very low chance of congestion.

If the flow continues beyond a configurable number of packets, its packets are no longer promoted, and subsequent packets in the flow traverse the originally assigned queue. Meanwhile, other newly observed flows can be promoted, so short-lived flows benefit from faster session establishment and flow completion.
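A minimal sketch of the promotion logic described above follows. Each flow's first `max_promoted` packets are steered to a priority queue, and later packets fall back to the queue their classification assigned. The queue numbers, the default of 10 packets, and the `DppScheduler` name are hypothetical. On the switch, the packet limit is the configurable value mentioned above and flow tracking happens in hardware, and this sketch omits aging out idle flows.

```python
from collections import defaultdict
from typing import DefaultDict, Tuple

FiveTuple = Tuple[str, str, int, int, int]

PRIORITY_QUEUE = 7  # hypothetical strict-priority (or higher-weighted DWRR) queue


class DppScheduler:
    """Toy Dynamic Packet Prioritization: promote only the first packets of each flow."""

    def __init__(self, max_promoted: int = 10) -> None:
        self.max_promoted = max_promoted
        self.packets_seen: DefaultDict[FiveTuple, int] = defaultdict(int)

    def select_queue(self, flow: FiveTuple, assigned_queue: int) -> int:
        """Return the egress queue for the next packet of `flow`."""
        self.packets_seen[flow] += 1
        if self.packets_seen[flow] <= self.max_promoted:
            return PRIORITY_QUEUE  # new flow: expedite handshake and early packets
        return assigned_queue      # established flow: back to its normal class


dpp = DppScheduler(max_promoted=3)
flow = ("10.0.0.1", "10.0.0.2", 50000, 80, 6)
queues = [dpp.select_queue(flow, assigned_queue=1) for _ in range(5)]
print(queues)  # [7, 7, 7, 1, 1]
```

Note that the logic never inspects the protocol, so in this model a short UDP exchange is promoted exactly like a TCP handshake.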

AFD and UDP Traffic

One frequently asked question about AFD is whether it is appropriate to use it with User Datagram Protocol (UDP) traffic. AFD by itself does not distinguish between protocol types. It only determines whether a given 5-tuple flow is an elephant or not. We generally state that AFD should not be enabled on queues that carry non-TCP traffic. That is an oversimplification, of course. For example, a low-bandwidth UDP application would never be subject to AFD marking or discards, because it would never be flagged as an elephant flow in the first place.

Recall that AFD can either mark traffic with ECN or discard traffic. With ECN marking, collateral damage to a UDP-based application is unlikely. If ECN CE is marked, either the application is ECN-aware and adjusts its transmission rate, or it ignores the marking completely. That said, AFD with ECN marking won't help much with congestion avoidance if the UDP-based application is not ECN-aware.

On the other hand, if you configure AFD in discard mode, sustained-bandwidth UDP applications may suffer performance issues. UDP has no built-in congestion-management mechanisms: discarded packets are simply never delivered and are not retransmitted, at least not by any UDP mechanism. Because AFD is configurable on a per-queue basis, it is better in this case to classify traffic by protocol and make sure that traffic from high-bandwidth UDP-based applications always uses a queue that does not have AFD enabled.
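The recommendation above amounts to a classification policy keyed on the IP protocol number. The sketch below expresses it in Python rather than switch configuration, with hypothetical queue numbers and a hypothetical set of AFD-enabled queues. The property it checks is the one this paragraph asks for: no UDP traffic lands on an AFD-enabled queue.

```python
TCP, UDP = 6, 17  # IANA IP protocol numbers

# Hypothetical queue plan: queue 1 has AFD enabled; queues 0 and 2 do not.
AFD_QUEUES = {1}
QUEUE_BY_PROTOCOL = {TCP: 1, UDP: 2}
DEFAULT_QUEUE = 0  # everything else (ICMP, etc.), kept off AFD


def classify(ip_protocol: int) -> int:
    """Return the egress queue for a packet, based only on its IP protocol number."""
    return QUEUE_BY_PROTOCOL.get(ip_protocol, DEFAULT_QUEUE)


def afd_applies(ip_protocol: int) -> bool:
    """True if packets of this protocol land on an AFD-enabled queue."""
    return classify(ip_protocol) in AFD_QUEUES


print(afd_applies(TCP), afd_applies(UDP))  # True False
```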

What Is a VXLAN/EVPN Fabric?

VXLAN/EVPN is one of the fastest-growing Data Center fabric technologies in recent memory. VXLAN/EVPN consists of two key components: the data-plane encapsulation, VXLAN, and the control-plane protocol, EVPN.

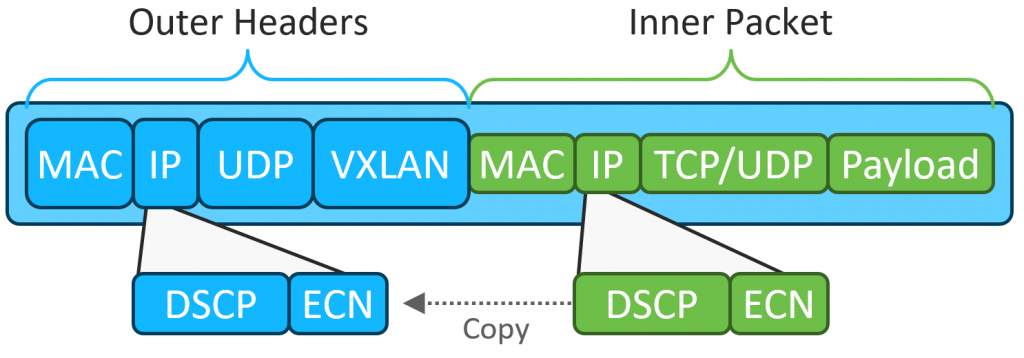

You can find extensive details and discussions of these technologies on cisco.com, as well as from many other sources. An in-depth discussion is outside the scope of this blog post. When talking about QoS and congestion management in a VXLAN/EVPN fabric, the focus is the data-plane encapsulation. Figure 4 illustrates the VXLAN data-plane encapsulation, with emphasis on the inner and outer DSCP/ECN fields.

As you can see, VXLAN encapsulates overlay packets in IP/UDP/VXLAN "outer" headers. Both the inner and outer headers contain the DSCP and ECN fields.
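In each IP header, the DSCP and ECN fields shown in Figure 4 share a single byte: DSCP occupies the upper six bits and ECN the lower two (RFC 2474 and RFC 3168). The sketch below packs and unpacks that byte. It also builds the 8-byte VXLAN header defined in RFC 7348, which consists of a flags byte with the I bit set, reserved bits, and a 24-bit VNI. It is only meant to make the field layout concrete, and the helper names are our own.

```python
import struct


def tos_byte(dscp: int, ecn: int) -> int:
    """Combine a 6-bit DSCP and a 2-bit ECN value into the IP ToS/Traffic Class byte."""
    return ((dscp & 0x3F) << 2) | (ecn & 0x03)


def split_tos(tos: int) -> tuple[int, int]:
    """Return (dscp, ecn) from a ToS/Traffic Class byte."""
    return tos >> 2, tos & 0x03


def vxlan_header(vni: int) -> bytes:
    """8-byte VXLAN header: flags (I bit set), 24 reserved bits, 24-bit VNI, 8 reserved bits."""
    return struct.pack("!II", 0x08 << 24, (vni & 0xFFFFFF) << 8)


tos = tos_byte(dscp=46, ecn=0b10)  # EF traffic from an ECN-capable (ECT(0)) sender
print(hex(tos), split_tos(tos), vxlan_header(10010).hex())
# 0xba (46, 2) 0800000000271a00
```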

With VXLAN, a Cisco Nexus 9000 switch serving as an ingress VXLAN tunnel endpoint (VTEP) takes a packet originated by an overlay workload, encapsulates it in VXLAN, and forwards it into the fabric. During encapsulation, the switch copies the inner packet's DSCP and ECN values to the outer headers.

Transit devices such as fabric spines forward the packet based on the outer headers to reach the egress VTEP, which decapsulates the packet and transmits it unencapsulated to the final destination. By default, both the DSCP and ECN fields are copied from the outer IP header into the inner (now decapsulated) IP header.
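Putting the encapsulation and decapsulation steps together, the sketch below follows one packet through the fabric. The ingress VTEP copies the inner DSCP/ECN into the outer header, and a congested transit switch sets ECN CE on the outer header only. The egress VTEP then copies the outer values back into the inner header, which is the default behavior described above. The `IpHeader` and `VxlanPacket` types and the function names are hypothetical; only the copy semantics come from the text. Because the CE mark survives decapsulation, an ECN-capable TCP receiver in the overlay still sees it.

```python
from dataclasses import dataclass, replace

NOT_ECT, ECT_1, ECT_0, CE = 0b00, 0b01, 0b10, 0b11  # ECN codepoints (RFC 3168)


@dataclass(frozen=True)
class IpHeader:
    dscp: int
    ecn: int


@dataclass(frozen=True)
class VxlanPacket:
    outer: IpHeader  # outer IP header (outer UDP and VXLAN headers omitted here)
    inner: IpHeader  # original overlay packet's IP header


def encapsulate(inner: IpHeader) -> VxlanPacket:
    """Ingress VTEP: copy the inner DSCP and ECN into the outer header."""
    return VxlanPacket(outer=IpHeader(inner.dscp, inner.ecn), inner=inner)


def congested_transit(pkt: VxlanPacket) -> VxlanPacket:
    """Congested spine queue in ECN-marking mode: sets CE on the outer header only."""
    if pkt.outer.ecn in (ECT_0, ECT_1):
        return replace(pkt, outer=replace(pkt.outer, ecn=CE))
    return pkt  # not ECN-capable: cannot be marked (a real queue would typically drop it)


def decapsulate(pkt: VxlanPacket) -> IpHeader:
    """Egress VTEP (default behavior): copy outer DSCP and ECN back into the inner header."""
    return replace(pkt.inner, dscp=pkt.outer.dscp, ecn=pkt.outer.ecn)


delivered = decapsulate(congested_transit(encapsulate(IpHeader(dscp=26, ecn=ECT_0))))
print(delivered)  # IpHeader(dscp=26, ecn=3)
```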

While traversing the fabric, overlay traffic may pass through multiple switches, each implementing QoS and queuing policies defined by the network administrator. These policies might simply be default configurations, or they may be more complex, for example classifying different applications or traffic types, assigning them to unique classes, and controlling the scheduling and congestion management behavior for each class.

How Do the Intelligent Buffer Capabilities Work in a VXLAN Fabric?

Because the VXLAN data plane is an encapsulation, packets traversing fabric switches consist of the original TCP, UDP, or other protocol packet inside an IP/UDP/VXLAN wrapper. This raises the question: how do the Intelligent Buffer mechanisms behave with such traffic?

As discussed earlier, sustained-bandwidth UDP applications can potentially suffer performance issues when traversing an AFD-enabled queue. However, there is a key distinction to make here: VXLAN is not a "native" UDP application, but rather a UDP-based tunnel encapsulation. While there is no congestion awareness at the tunnel level, the tunneled packets can carry any kind of application traffic, whether TCP, UDP, or virtually any other protocol.

Thus, for a TCP-based overlay application, if AFD either marks or discards a VXLAN-encapsulated packet, the original TCP stack still receives ECN-marked packets or misses a TCP sequence number, and either signal causes TCP to reduce its transmission rate. In other words, the original goal is still achieved: congestion is avoided by causing the applications to reduce their rate.

Similarly, high-bandwidth UDP-based overlay applications respond to AFD marking or discards just as they would in a non-VXLAN environment. If you have high-bandwidth UDP-based applications, we recommend classifying based on protocol and ensuring these applications are assigned to queues that do not have AFD enabled.

As for DPP, while TCP-based overlay applications benefit most, especially during initial flow setup, UDP-based overlay applications can benefit as well. With DPP, both TCP and UDP short-lived flows are promoted to a higher-priority queue, speeding flow completion. Therefore, enabling DPP on any queue, even one carrying UDP traffic, should have a positive impact.

Key Takeaways

VXLAN/EVPN fabric designs have gained significant traction in recent years, and ensuring excellent application performance is paramount. Cisco Nexus 9000 Series switches, with their hardware-based Intelligent Buffering capabilities, ensure that even in an overlay application environment you can make efficient use of the available buffer, minimize network congestion, speed flow establishment and flow completion, and avoid drops due to microbursts.

More Information

You can find more information about the technologies discussed in this blog at www.cisco.com:
